From data modeling to knowledge graphs

Published: November 24, 2025

Data modeling has been the practice of adding business meaning to data for over thirty years. It involves mapping database entities to end-user vocabulary, identifying relationships across entities so they can be combined meaningfully, and creating the metrics and measures that decision-makers care about. The goal is to transform raw bits and bytes into actionable insights for the business.

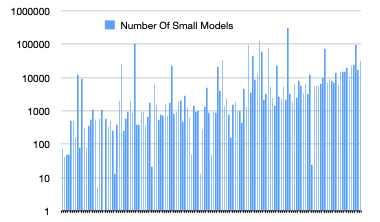

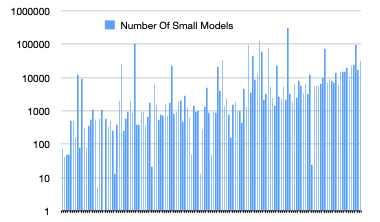

Real-world Complexities

To understand the complexities and effort involved in data modeling, we analyzed the schemas of 137 real-world databases that connected to the Tursio platform over the last year. We counted the number of tables, the total number of columns, and the number of columns identified as dimensions and measures, respectively. The figures below show the distribution.

We see that real-world databases can easily contain hundreds of tables and thousands of columns, many of which are dimensions or measures. Schemas of this size require considerable cleaning, semantic mapping, relationship building, and other curation before they can serve as data models for different business use cases. This process is complex, time-consuming, and labor-intensive, and should ideally be automated.
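
To make the counting exercise above concrete, here is a minimal sketch of profiling one schema. The sample schema, the `classify_column` heuristic (numeric non-key columns count as measures, columns ending in `_id` count as keys, everything else as dimensions), and all function names are assumptions for illustration; the post does not describe how Tursio actually identifies dimensions and measures.

```python
from collections import Counter

# Hypothetical schema snapshot: table -> list of (column name, SQL type).
SCHEMA = {
    "orders": [
        ("order_id", "INTEGER"),
        ("customer_id", "INTEGER"),
        ("order_date", "DATE"),
        ("status", "TEXT"),
        ("total_amount", "DECIMAL"),
    ],
    "customers": [
        ("customer_id", "INTEGER"),
        ("region", "TEXT"),
        ("lifetime_value", "DECIMAL"),
    ],
}

NUMERIC_TYPES = {"INTEGER", "DECIMAL", "FLOAT"}


def classify_column(name, sql_type):
    """Crude heuristic (an assumption, not Tursio's method)."""
    if name.endswith("_id"):
        return "key"
    if sql_type in NUMERIC_TYPES:
        return "measure"
    return "dimension"


def profile_schema(schema):
    """Return table, column, dimension, and measure counts for one schema."""
    kinds = Counter(
        classify_column(name, sql_type)
        for columns in schema.values()
        for name, sql_type in columns
    )
    return {
        "tables": len(schema),
        "columns": sum(len(columns) for columns in schema.values()),
        "dimensions": kinds["dimension"],
        "measures": kinds["measure"],
    }


profile = profile_schema(SCHEMA)
print(profile)
```

Running the same pass over many schemas and plotting the resulting counts would give distributions of the kind described in this section. A real classifier would need far more than type and name checks.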

Manual Context Building

Zooming out, data modeling is essentially the process of building a rich business context on top of raw data. This context is what business users rely on to make sense of the information. Today, practitioners create this business context (i.e., data models) manually and on demand, based on the questions or insights business users are seeking.

Building this context manually requires significant effort. BI engineers must understand both the data and the business requirements; they must continually add new context for different users and questions, often duplicating work as new needs arise. And because there is no systematic way to accumulate or consolidate this context over time, it remains fragmented.

Automatic Context Generation

Instead of assembling the context manually through piecemeal data modeling, modern enterprises require a global view of their data that can be leveraged by all users for all questions. Such a knowledge graph should:

- Subsume all data models that business users require.

- Dynamically provide the right data model as context for each user question.

- Reduce the manual effort and time spent building individual data models.

- Unblock business users, enabling faster insights and actions.
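
One way to picture "dynamically providing the right data model" is to treat tables as nodes and join relationships as edges, then carve out the smallest connected piece that covers the tables a question touches. The sketch below does this with a breadth-first search. The table names, the `JOINS` list, and the functions `join_path` and `data_model_for` are hypothetical, and this is not a description of how Tursio selects context.

```python
from collections import deque

# Hypothetical join relationships: each edge is a pair of joinable tables.
JOINS = [
    ("orders", "customers"),
    ("orders", "order_items"),
    ("order_items", "products"),
    ("products", "suppliers"),
    ("customers", "regions"),
]


def build_graph(joins):
    """Build an undirected adjacency map from join pairs."""
    graph = {}
    for left, right in joins:
        graph.setdefault(left, set()).add(right)
        graph.setdefault(right, set()).add(left)
    return graph


def join_path(graph, start, goal):
    """Breadth-first search for a shortest join path between two tables."""
    queue = deque([[start]])
    seen = {start}
    while queue:
        path = queue.popleft()
        if path[-1] == goal:
            return path
        for neighbor in sorted(graph.get(path[-1], ())):
            if neighbor not in seen:
                seen.add(neighbor)
                queue.append(path + [neighbor])
    return None


def data_model_for(graph, tables):
    """Collect the tables needed to connect each requested table to the first."""
    anchor, *rest = tables
    model = {anchor}
    for table in rest:
        path = join_path(graph, anchor, table)
        if path is None:
            raise ValueError(f"no join path from {anchor} to {table}")
        model.update(path)
    return sorted(model)


graph = build_graph(JOINS)
model = data_model_for(graph, ["customers", "products"])
print(model)
```

A question that mentions customers and products pulls in `orders` and `order_items` as connecting tables, while `suppliers` and `regions` stay out of the context. The global graph is built once, and each question gets its own small data model from it.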

However, creating this knowledge graph is challenging. Production databases are large, which makes the resulting graph complicated. The process must be automated to minimize manual work, yet the graph must remain accurate and useful for business questions. The knowledge graph is essentially a cleaner, business-friendly abstraction layered on top of messy, system-level tables and columns. Achieving this requires LLM-powered semantic interpretation of every part of the data and schema.

The Tursio platform addresses this problem by automatically inferring a knowledge graph from underlying databases. The goal is to construct a global view that supports all required data models. In fact, our earlier version of this graph was called the “Large Data Model”; today, we refer to it more aptly as a semantic knowledge graph, reflecting its deeper semantic interpretation.

The figure below shows the number of small models trained in Tursio to build the semantic knowledge graph for each of the 137 real-world databases. We see that tens to hundreds of thousands of small models are needed to accurately capture the semantics of real-world databases. Together, these small models capture name simplifications, join relationships, table and column descriptions, ontologies, default measures, aspects, aliases, PII, and so on.
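
As a rough illustration of what such annotations might look like once attached to a graph node, the sketch below stores a few of the listed items (name simplification, description, aliases, PII flag, default measure) on a column and resolves a business term against them. The `ColumnNode` class, its field names, the sample columns, and the `resolve` helper are all hypothetical. The sketch only models the stored output, not the small models that would produce it.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class ColumnNode:
    """One column in the graph, with hypothetical annotation fields."""
    raw_name: str                          # system-level column name
    simple_name: str                       # name simplification
    description: str = ""                  # column description
    aliases: List[str] = field(default_factory=list)
    is_pii: bool = False                   # personally identifiable information
    default_measure: Optional[str] = None  # e.g. "sum" for additive measures


def resolve(term, nodes):
    """Match a business term against simplified names and aliases."""
    wanted = term.strip().lower()
    for node in nodes:
        names = [node.simple_name, *node.aliases]
        if wanted in (name.lower() for name in names):
            return node
    return None


NODES = [
    ColumnNode("cust_eml_addr", "customer email",
               "Primary contact email", ["email"], is_pii=True),
    ColumnNode("ord_tot_amt", "order total",
               "Order value including tax", ["revenue", "sales"],
               default_measure="sum"),
]

match = resolve("Revenue", NODES)
print(match.raw_name, match.default_measure)
```

With annotations like these, a user can ask about "revenue" without knowing that the underlying column is called `ord_tot_amt`, and the PII flag gives downstream logic a place to apply access rules.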

Pros and Cons

Data models work well when schemas are small and the model is static, meaning it serves a fixed set of questions. In such cases, only a few models are needed, and they can be created manually. Classic examples include a handful of KPI dashboards that rarely change.

Knowledge graphs, on the other hand, are better suited for large database schemas and for situations where data models need to be dynamic, as new questions or new business users emerge constantly. In these scenarios, many different data models are possible. As more data and requirements accumulate, manual modeling becomes increasingly tedious and unsustainable.

Conclusion

Businesses need to be data-driven, and access to data is essential for all decision-makers. Data modeling has been the traditional way of providing that data piece by piece; however, it has proven to be slow and insufficient. Automating the translation of data for business users via knowledge graphs can accelerate that process by dynamically pulling the required context from a global view. Tursio is taking that leap by inferring knowledge graphs and leveraging them to answer business questions. This is just the beginning of a new era of productivity with much more to come.