From 80% to 100%: The Accuracy Marathon in Enterprise AI

Published: Oct 28, 2025

When organizations evaluate AI solutions, accuracy often becomes the headline metric. But those numbers don’t reveal the story behind them: the people, processes, and persistence required to take an engine from “good enough” to exceptional. That story matters because most AI tools struggle with accuracy: they are anywhere between 50% and 80% accurate out of the box, which is typically insufficient for production deployments. In this post, we share our war story of closing that gap and going from 80% to 100% accuracy in a production environment. In our experience, this journey is less about the technology itself and more about the collaboration that fuels its growth.

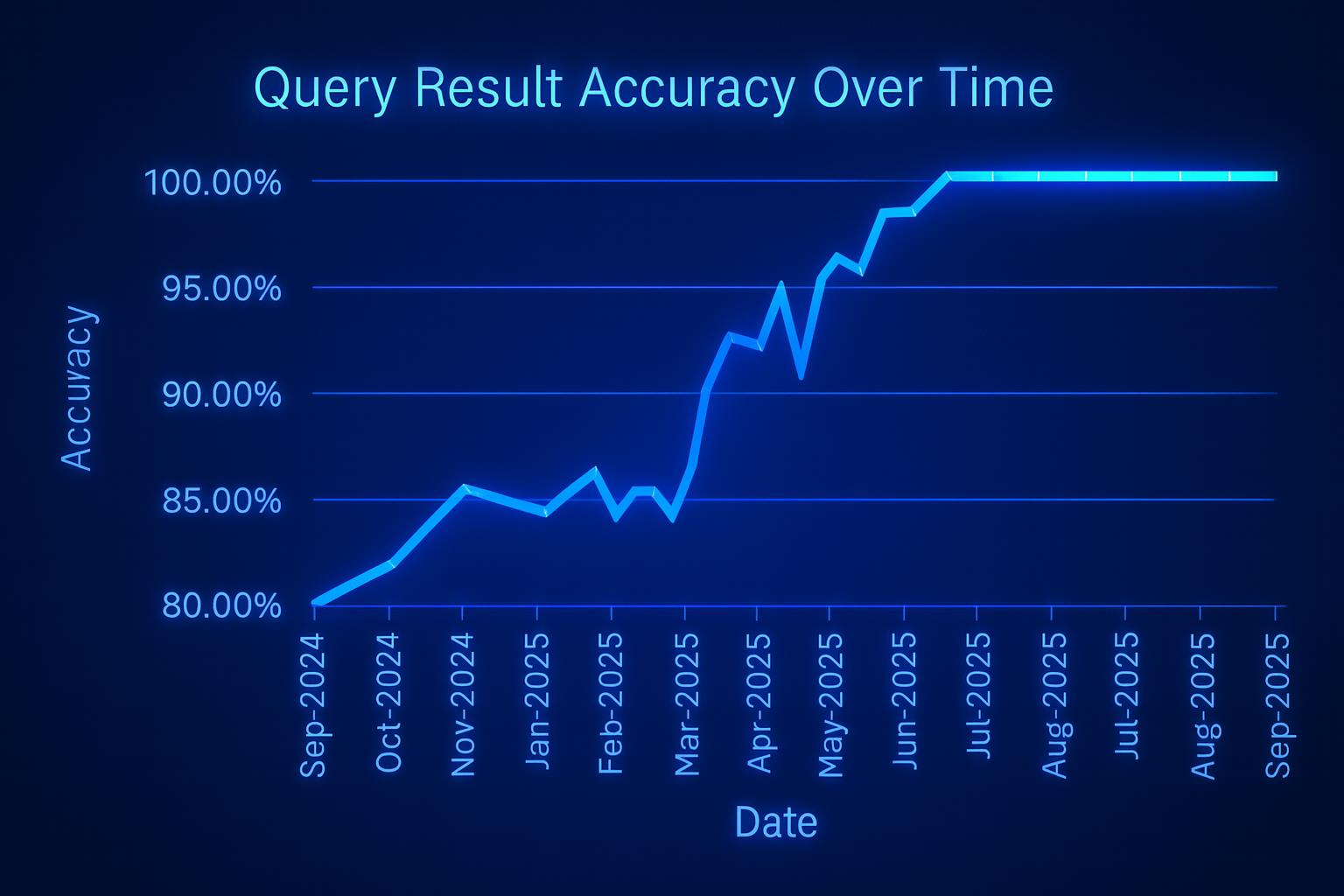

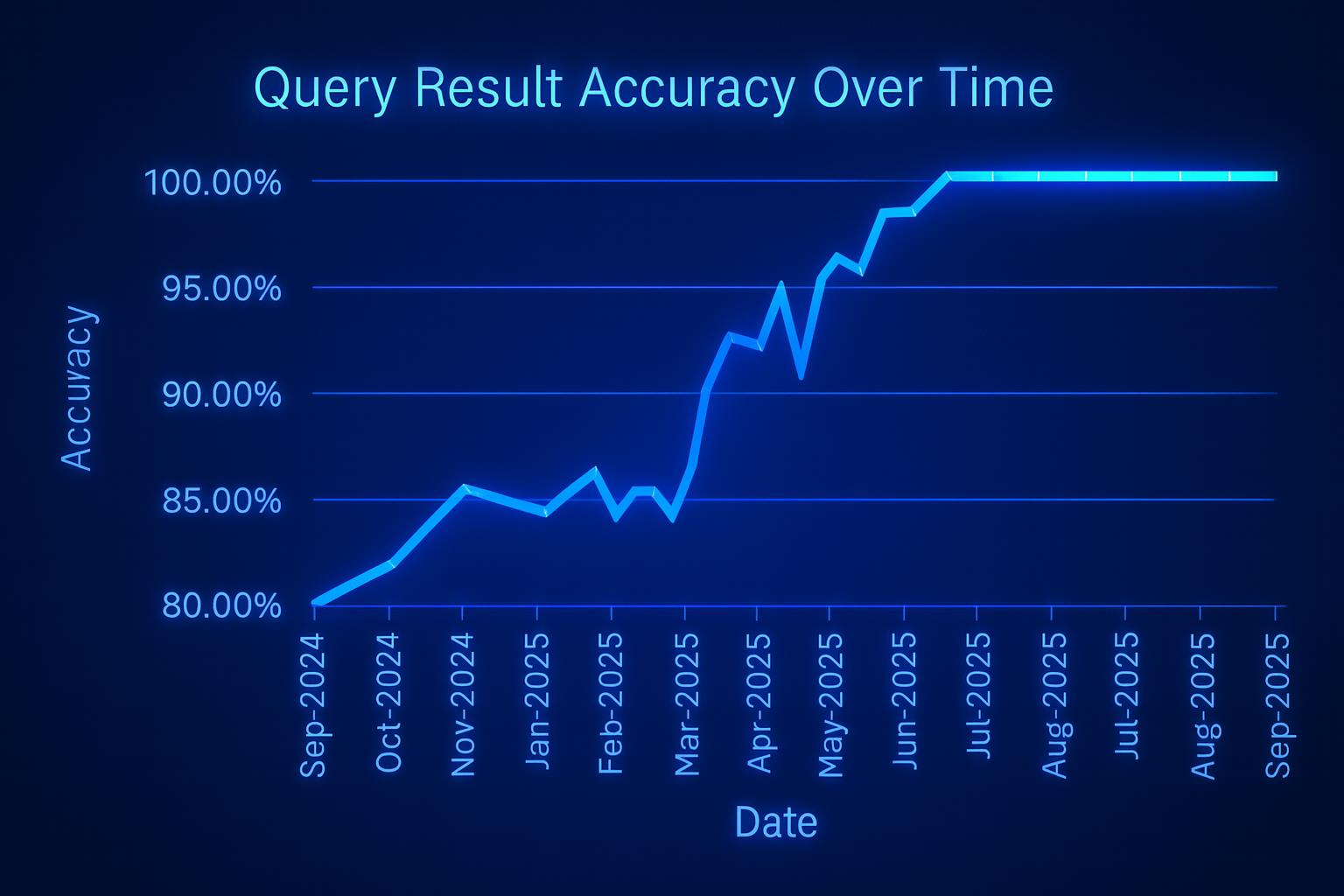

Over the past year, our team worked with a leading home warranty provider in the U.S. on exactly this journey. What began as roughly 80% accuracy in our out-of-the-box solution eventually became a sustained 100% accuracy as measured by human evaluation. Many say that is impossible in enterprise AI, yet it is often required before an AI system can be trusted in deployment. The road wasn’t short. It’s a story of constant feedback, iteration, and collaboration.

The Numbers Tell a Story

At the outset, the Tursio AI engine stood at about 80% accuracy. With steady work, we saw improvements:

- 85% within a few months

- 90% as feedback loops matured

- 95% as business rules and refinements stacked up

- 100%, sustained over the past two months

A simple chart of this trajectory illustrates more than numbers; it shows a steady commitment to progress. But numbers alone don’t explain how we achieved these results. The real transformation happened in the day-to-day collaboration between our team and the customer.

Behind the Scenes: How We Got Here

The real story lies in the process, not just the outcome. Once the initial model was implemented in the customer environment, it provided a strong baseline from which to build. Every query from a human agent came with a feedback opportunity. As soon as an agent searched for an answer, they were prompted to fill out a short form stating whether the response was correct. If it wasn’t, they were required to provide context, e.g., “My business constraints are <this>, but the engine said <that>.” This ensured that feedback was not just a simple yes or no but a highly actionable insight that directly guided improvements to the model.

By converting every user interaction into a data point, we built a living feedback ecosystem, one where the AI and its users co-evolved in real time.
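To make the feedback form concrete, here is a minimal sketch of how such a record and the resulting accuracy figure could be represented. It is an illustration only, not the actual Tursio implementation: the `Feedback` class, its field names, and the `accuracy` helper are all hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Feedback:
    """One agent-submitted form for a single query (hypothetical schema)."""
    query: str
    response: str
    correct: bool
    # Context fields mirror "My business constraints are <this>,
    # but the engine said <that>."
    business_constraint: Optional[str] = None
    engine_said: Optional[str] = None

    def __post_init__(self) -> None:
        # A "no" on its own is not actionable, so an incorrect rating
        # must carry both pieces of context.
        if not self.correct and not (self.business_constraint and self.engine_said):
            raise ValueError("incorrect responses require context")


def accuracy(forms: list[Feedback]) -> float:
    """Share of responses that human agents marked as correct."""
    if not forms:
        raise ValueError("no feedback to evaluate")
    return sum(form.correct for form in forms) / len(forms)
```

Requiring context at construction time is one way to enforce the rule that a negative rating always arrives with an explanation; a real form would likely enforce this in the user interface as well.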

This structured feedback formed the heartbeat of our improvement cycle. Alongside daily feedback, our teams held bi-weekly check-ins to review aggregated insights, spot patterns, and refine rules. Based on feedback, we added business rules to constrain the responses and cover the gaps, changed them to reflect business nuances, replaced them when outdated approaches no longer fit, and removed them when they introduced confusion. This adaptability kept the system evolving in real time with our customer’s needs.
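The four rule operations described above (add, change, replace, remove) can be pictured as a small registry. The sketch below is an assumption about shape only: `RuleBook`, its method names, and the idea of storing rules as named text entries are hypothetical and are not taken from the production system.

```python
class RuleBook:
    """Named business rules that constrain responses (hypothetical sketch)."""

    def __init__(self) -> None:
        self._rules: dict[str, str] = {}

    def _require(self, name: str) -> None:
        if name not in self._rules:
            raise KeyError(f"unknown rule: {name}")

    def add(self, name: str, text: str) -> None:
        """Cover a gap with a new rule."""
        if name in self._rules:
            raise KeyError(f"rule already exists: {name}")
        self._rules[name] = text

    def change(self, name: str, text: str) -> None:
        """Adjust an existing rule to reflect a business nuance."""
        self._require(name)
        self._rules[name] = text

    def replace(self, old_name: str, new_name: str, text: str) -> None:
        """Retire an outdated rule and put a new one in its place."""
        self._require(old_name)
        if new_name != old_name and new_name in self._rules:
            raise KeyError(f"rule already exists: {new_name}")
        del self._rules[old_name]
        self._rules[new_name] = text

    def remove(self, name: str) -> None:
        """Drop a rule that introduced confusion."""
        self._require(name)
        del self._rules[name]

    def active(self) -> list[str]:
        """Rule texts currently in force, in insertion order."""
        return list(self._rules.values())
```

Keeping the four operations explicit, instead of editing one free-form block of instructions, makes each bi-weekly change easy to review and to undo.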

This approach made improvement proactive rather than reactive: we anticipated needs and fine-tuned accuracy before issues surfaced.

Lessons We Learned Along the Way

In the beginning, those weekly syncs with our customer felt slow and out of rhythm. Each meeting was a deep dive into dozens of feedback forms and examples, many of which pointed to subtle inconsistencies that weren’t easy to fix. There were moments where progress felt incremental, where we’d spend an hour dissecting why the engine misinterpreted a single clause in a policy. But over time, something shifted. We developed a rhythm, a clear process that everyone trusted. The customer would spot the issue, we’d discuss it live, trace it back to a root cause, propose a fix, implement it, and test the result by the next meeting. It became almost second nature: identify, convey, resolve, validate.

What started as reactive troubleshooting turned into a proactive, almost intuitive partnership. We could anticipate where new rules might break old logic, where language variations might trip up accuracy, and how to structure feedback for faster iteration.
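One common way to catch a new rule breaking old logic is to replay previously approved answers after every change. The sketch below assumes this technique for illustration; the source does not say how validation was automated. `find_regressions`, the `approved` mapping, and the `engine` callable are hypothetical names, and exact string comparison stands in for whatever correctness check a human reviewer would apply.

```python
from typing import Callable


def find_regressions(
    approved: dict[str, str],
    engine: Callable[[str], str],
) -> list[str]:
    """Return the queries whose current answer differs from the approved one.

    `approved` maps each past query to the answer agents signed off on.
    `engine` is any callable that answers a query with the latest rules applied.
    """
    return [query for query, answer in approved.items() if engine(query) != answer]
```

An empty result means the change left every previously validated answer intact; any returned query is a candidate for discussion at the next check-in.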

The process wasn’t limited to a single use case. We tested and refined across multiple categories that our customer serves, showing that the approach scaled across their business. Even more categories are slated for deployment soon, promising further impact. With every cycle, queries became more consistent, and responses aligned more closely with our customer’s policies. Over time, accuracy climbed steadily, culminating in a sustained 100% over the past two months!

What This Means for Enterprise AI

This story highlights a truth that every enterprise considering AI must recognize: AI is not a switch to be flipped, but a journey to be undertaken. The true differentiator lies in how teams operationalize learning, not just how they build models. Accuracy at launch is just the starting line. It takes a village: customers, customer success teams, product leaders, engineers, and many others all play a role. The process of building, testing, and refining requires commitment. When done right, the results are transformative. With persistence, AI can deliver not only higher accuracy but also consistency and trust in mission-critical workflows.

The journey from 80% to 100% accuracy wasn’t a single breakthrough; it was about collaborating with the customer. For enterprises, the answer is clear: AI success requires investment beyond technology. It requires people, process, and patience. When those align, the results can be extraordinary and replicable across categories, use cases, and industries.
